Nicole Salem Portfolio

- nicolesalem4finace

- Dec 11, 2025

Hi, I’m Nicole Salem, an Industrial–Organizational Psychology researcher currently enrolled in the M.S. I/O Psychology program at Marian University. My academic focus is on how structured hiring, performance evaluation, and training processes affect employee performance and long-term workforce outcomes.

Before returning to graduate study, I spent more than 10 years leading Human Resources, recruiting, onboarding, and training efforts in regulated and growth-stage environments. That experience changed how I think about workforce systems. When roles are clearly defined, hiring is structured, and decisions are documented thoughtfully, teams function more predictably and fairly.

In my graduate program, I focus on evaluating hiring and training systems so they are structured, consistent, and defensible. I use that same lens in talent acquisition, training development, and organizational design work.

The projects in this portfolio demonstrate how I apply structured evaluation and system design in organizational settings.

Portfolio Overview

Global Wellness and Onboarding Program Proposal: A structured workforce design proposal for a hybrid, multi-region SaaS organization. Includes implementation planning, workload safeguards, evaluation metrics, and legal compliance considerations.

Workplace Wellness Survey: A custom survey instrument designed to assess workload strain, perceived support, and engagement drivers. Built with structured response formats to support clear interpretation and defensible reporting.

Structured Interview Research Brief: An applied analysis examining structured interview implementation, rater consistency, and documentation standards. Includes discussion of behaviorally anchored rating scales and recommendations for calibration and reliability monitoring.

Legal Risk Memo: Hiring and Evaluation. A compliance-focused memo outlining adverse impact risk, ADA considerations, and structured job analysis requirements in selection systems.

Training & Performance Management Plan: A structured intervention plan aligning training needs analysis, role clarity, and evaluation metrics within a regulated environment.

Research Prospectus: Tracking Modality Study: A randomized experimental design examining digital versus paper tracking systems, including measurement strategy and statistical analysis plan.

Professional Feature: Recognition by Tradeflock, highlighting cross-functional leadership experience across finance and HR roles.

ABC Company Global Employee Wellness Program Proposal

1. Program Design

ABC Company is a global SaaS company serving quick-service and fast-casual restaurant operators through point-of-sale, analytics, and workforce technology. The organization employs approximately 50 people across the United States, the European Union, and the Philippines. Non–Customer Success teams follow a hybrid model near Salt Lake City, while international teams work fully remotely.

Because workflow depends on rapid coordination, multi-time-zone collaboration, and continuous digital communication, employees face sustained cognitive demands. This proposal introduces a structured wellness framework designed to improve predictability, support recovery, and maintain equity across regions.

The program integrates three dimensions: flexible scheduling, cognitive load reduction, and physical wellness support. Grounded in the Job Demands–Resources model (Schaufeli, 2017), the framework assumes that sustained cognitive load requires intentional resource replenishment.

Key structural elements include:

A 36-hour, four-day workweek (Monday–Thursday) for salaried non–Customer Success teams

Predictable collaboration hours (7:30 a.m.–3:00 p.m. MST)

Meeting-density safeguards

Protected focus time

Because role demands differ, scheduling policies are clearly defined by function. Engineering, product, operations, and administrative teams may design schedules within a 9:00 a.m.–5:00 p.m. window, provided collaboration hours are maintained. Customer Success operates under a coverage model from 6:00 a.m. to 10:00 p.m. MST, with structured shift-trade autonomy.

Physical wellness resources are structured for global equity. SelectHealth-covered employees receive up to $240 annually in gym reimbursement. Employees outside that coverage region receive a $240 annual wellness stipend to maintain parity. Utah hybrid employees have access to voluntary group workouts and healthy food options. Remote employees receive a $50 monthly healthy-meal stipend.

Meeting-hour limits, Quiet Hours (6:00 p.m.–7:00 a.m. local time), and the four-day structure create a predictable weekly rhythm intended to reduce ambiguity and unnecessary strain.
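One way to check how the collaboration window and Quiet Hours interact across regions is to convert the 7:30 a.m.–3:00 p.m. MST window into each region's local time. The sketch below is illustrative only: the fixed UTC offsets, the Central European example, and the function names are assumptions, not part of the proposal.

```python
from datetime import datetime, timedelta, timezone

# Fixed UTC offsets are an assumption for illustration; a production check
# should use named time zones so daylight saving time is handled.
MST = timezone(timedelta(hours=-7), "MST")
REGION_OFFSETS = {
    "Salt Lake City (MST)": MST,
    "Central Europe (CET, assumed)": timezone(timedelta(hours=1), "CET"),
    "Philippines (PHT)": timezone(timedelta(hours=8), "PHT"),
}


def collaboration_window_local(tz, day=datetime(2025, 1, 6)):
    """Return the 7:30 a.m.-3:00 p.m. MST window expressed in time zone tz."""
    start = day.replace(hour=7, minute=30, tzinfo=MST)
    end = day.replace(hour=15, minute=0, tzinfo=MST)
    return start.astimezone(tz), end.astimezone(tz)


def minutes_in_quiet_hours(start, end):
    """Count window minutes that fall inside local Quiet Hours (6 p.m.-7 a.m.)."""
    minutes = 0
    current = start
    while current < end:
        if current.hour >= 18 or current.hour < 7:
            minutes += 1
        current += timedelta(minutes=1)
    return minutes


for region, tz in REGION_OFFSETS.items():
    start, end = collaboration_window_local(tz)
    overlap = minutes_in_quiet_hours(start, end)
    print(f"{region}: {start:%H:%M}-{end:%H:%M} local, "
          f"{overlap} of 450 minutes inside Quiet Hours")
```

Under these assumed offsets, the window falls at 10:30 p.m.–6:00 a.m. in the Philippines, which sits entirely inside local Quiet Hours. A check like this could help identify where regional adjustments are worth testing during the pilot.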

2. Implementation Plan

The program rollout follows a three-phase implementation model to ensure consistency across regions.

Phase 1: Foundation (Month 1): HR finalizes program documentation and global benefit equivalency structures. Managers receive training on scheduling policies, collaboration windows, ADA accommodation support, and workload monitoring.

Phase 2: Pilot (Months 2–3): A 60-day pilot is conducted with one engineering team and one Customer Success cohort. Data is gathered on meeting density, Slack usage patterns, stipend utilization, and perceptions of workload fairness.

Phase 3: Global Rollout (Month 4): Following pilot adjustments, the program launches company-wide. Communication includes policy guides distributed via HRIS, all-hands review sessions, and manager-led implementation discussions. A 30-day transition window supports adjustment without penalty.

3. Evaluation Plan

Program evaluation uses psychological, behavioral, and operational indicators.

Psychological Measures

Quarterly UWES-9 administration

Exhaustion scales

Monthly pulse interviews

Workflow Indicators

Weekly meeting-hour totals

Frequency of back-to-back meetings

Focus time access

Collaboration window adherence

Operational Indicators

Absenteeism

PTO usage

Turnover intention markers

Customer Success escalation volume

Wellness stipend utilization

Data is collected through validated surveys, HRIS reporting, digital communication analytics, and structured interviews. Monthly reviews focus on workload patterns. Quarterly reviews integrate engagement and utilization data. Biannual and annual summaries inform structural adjustments.
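The workflow indicators above could be derived from calendar exports. The following is a minimal sketch, assuming each meeting is available as a (start, end) pair for one employee in one week. The function name, the data layout, and the 5-minute definition of "back-to-back" are all hypothetical choices, not specifications from the proposal.

```python
from datetime import datetime, timedelta


def weekly_meeting_metrics(meetings, gap=timedelta(minutes=5)):
    """Summarize one employee's meetings for one week.

    meetings: list of (start, end) datetime pairs.
    gap: largest break between two meetings that still counts as
         back-to-back (an assumed definition).
    """
    ordered = sorted(meetings)
    total_seconds = sum((end - start).total_seconds() for start, end in ordered)
    back_to_back = sum(
        1
        for (_, prev_end), (next_start, _) in zip(ordered, ordered[1:])
        if timedelta(0) <= next_start - prev_end <= gap
    )
    return {"meeting_hours": total_seconds / 3600, "back_to_back": back_to_back}


# Hypothetical calendar data for a single Monday.
example_week = [
    (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 10, 0)),
    (datetime(2025, 1, 6, 10, 0), datetime(2025, 1, 6, 10, 30)),
    (datetime(2025, 1, 6, 13, 0), datetime(2025, 1, 6, 14, 0)),
]
print(weekly_meeting_metrics(example_week))
```

Keeping the back-to-back definition as a parameter lets the governance committee adjust it after the pilot without changing the reporting logic.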

A cross-functional Wellness and Workload Governance Committee meets quarterly to review findings and recommend adjustments.

4. Legal and Ethical Compliance

The program aligns with ADA, HIPAA, GINA, and EEOC standards. Participation is voluntary and unrelated to performance evaluation or compensation.

ADA: Confidential accommodation processes

HIPAA: No collection of employee medical data

GINA: No genetic data collection

EEOC: Uniform application across protected groups

The program prioritizes autonomy, equity, and confidentiality across all regions.

Workplace Wellness Needs Assessment

Survey Instrument Design Sample

This survey was developed to assess perceived workload strain, organizational support, and engagement drivers within a hybrid SaaS environment. Items use a 5-point Likert scale (Strongly Disagree to Strongly Agree) and include both closed- and open-ended responses.

Sample Items

“ABC Company supports a healthy balance between my work and personal life.”

“I feel a sense of belonging and connection with my colleagues.”

“Employee input is considered before job-related decisions are made.”

“I have enough control over how I organize and complete my work tasks.”

“Employees in my work group treat one another with respect.”

Additional items assess utilization of wellness resources, perceived barriers, and priority well-being domains. Open-ended questions capture qualitative feedback on stressors and program improvements.

ABC Company Structured Interview Research and Analysis

Applied Selection Study

Context

ABC Company implemented a structured interview rubric using behaviorally anchored rating scales (BARS) for entry-level operational roles. This pilot analysis examined whether structured interviews were associated with higher inter-rater consistency compared to unstructured interviews.

Research Question

Are interviews conducted with a structured rubric associated with greater rating consistency among hiring managers?

Methods

Six hiring managers rated eight standardized, pre-recorded interview responses under structured and unstructured conditions.

Independent variable: Interview format

Outcome variable: 1–5 rating scale (≥4 threshold)

Sample included day and weekend managers

Key Findings

95.83% of structured ratings met the ≥4 threshold.

Department averages were nearly identical under structured conditions.

Unstructured ratings showed greater variability.

Qualitative feedback identified workflow strain and training gaps as potential reliability risks.

Descriptive patterns were consistent with improved inter-rater reliability under structured conditions.
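The descriptive comparison can be sketched as follows. The ratings are hypothetical placeholders, not the study data. They assume each of the six managers rated all eight responses in each condition (48 ratings per condition), in which case 46 of 48 ratings at or above 4 gives the reported 95.83%.

```python
from statistics import mean, stdev

THRESHOLD = 4  # ratings of 4 or 5 count as meeting the threshold


def summarize(ratings):
    """Return count, mean, sample SD, and percent of ratings at or above 4."""
    share = sum(r >= THRESHOLD for r in ratings) / len(ratings)
    return {
        "n": len(ratings),
        "mean": round(mean(ratings), 2),
        "sd": round(stdev(ratings), 2),
        "pct_meeting": round(100 * share, 2),
    }


# Hypothetical ratings: 6 managers x 8 responses = 48 per condition.
structured = [5] * 20 + [4] * 26 + [3] * 2
unstructured = [5] * 6 + [4] * 14 + [3] * 16 + [2] * 9 + [1] * 3

print("structured:", summarize(structured))
print("unstructured:", summarize(unstructured))
```

A smaller standard deviation in the structured condition is the descriptive signal of tighter clustering. It does not replace a formal reliability estimate.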

Implications

In this pilot, structured interviews were associated with more consistent ratings, which supports the defensibility of hiring decisions.

Recommended next steps:

Quarterly calibration sessions

Monitoring for calibration drift and rater fatigue

Full ICC(2,k) reliability study with ≥.70 threshold
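For the recommended reliability study, ICC(2,k) can be computed from a two-way ANOVA decomposition of a ratings matrix with one row per interview response and one column per rater. The sketch below uses the standard two-way random-effects, average-measures formula. The function name is hypothetical, and the example matrix is the widely used six-target, four-judge example from Shrout and Fleiss (1979), not data from this pilot.

```python
def icc2k(ratings):
    """ICC(2,k): two-way random effects, absolute agreement, average of k raters.

    ratings: list of rows, one row per rated target, one column per rater.
    """
    n = len(ratings)       # number of targets (interview responses)
    k = len(ratings[0])    # number of raters (hiring managers)
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    bms = ss_rows / (n - 1)                # between-target mean square
    jms = ss_cols / (k - 1)                # between-rater mean square
    ems = ss_error / ((n - 1) * (k - 1))   # residual mean square
    return (bms - ems) / (bms + (jms - ems) / n)


# Textbook example data (6 targets x 4 judges), used only to check the formula.
example = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
value = icc2k(example)
print(f"ICC(2,k) = {value:.2f}; meets .70 threshold: {value >= 0.70}")
```

For this example the result is about .62, which would fall short of the ≥.70 threshold. In the full study the matrix would instead hold the eight responses as rows and the six managers as columns for each condition.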

Ethics & Bias Controls

Participation voluntary; plain-language consent

De-identified, aggregated reporting

Use of behaviorally anchored rating scales to support job relevance

Suppression of small subgroup data

A) Table 1. Descriptive Statistics

Table 1 Interpretation. Structured ratings were tightly clustered and uniformly high, whereas unstructured ratings showed greater variability and lower scores. These patterns reflect research demonstrating that behavioral anchors create shared mental models that constrain subjective drift (Spector, 2021). Although a full ICC analysis is not included in this pilot, the descriptive pattern is consistent with higher inter-rater reliability under structured conditions.

B) Power BI Dashboard

Figure 1. Average Overall Interview Ratings by Condition (Structured vs. Unstructured)

Figure 1 Caption: The structured condition shows markedly higher and more consistent average ratings than the unstructured condition, demonstrating how anchors guide managers toward shared interpretations of candidate performance.

C) Qualitative Mini-Summary

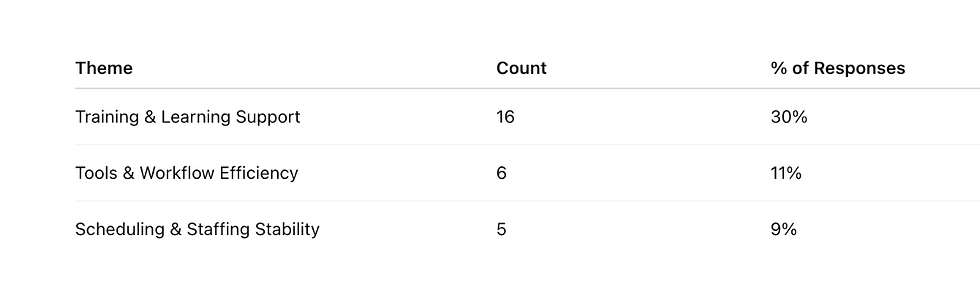

Table 2. Qualitative Themes From Manager Feedback

Quotes

“I still need hands-on practice, not just visual materials.” -Billing, SLC, Utah

“The ticketing system is slow; I have to wait between screens.” -Customer support, SLC, Utah

“More predictable shifts would help me to get more sleep and focus better.” -Engineering, SLC, Utah

Table 2 Interpretation. Themes reflect system-level conditions that influence cognitive bandwidth and preparation. As Venkatesh et al. (2023) note, qualitative data helps detect contextual pressures shaping human decision-making. Workflow strain, training gaps, and scheduling instability may indirectly influence how reliably managers use structured tools.

Appendix A Codebook

Memo: Preventing Legal Risk in Hiring and Evaluation

To: Human Resources Director

From: Nicole Salem

Subject: Strengthening Defensibility in Selection Systems

ABC Health Technology’s current use of blanket cognitive and personality testing, combined with unstructured interviews, creates avoidable legal and operational risk. These practices reflect a lack of formal job analysis, documented validation evidence, and structured evaluation processes. The following risks and recommendations are intended to strengthen defensibility while improving hiring consistency.

Risk 1: Assessments Used Without Job-Specific Validation

Cognitive ability tests are strong general predictors of performance (Spector, 2021), but predictive validity does not justify uniform application across all job families. Applying the same assessment across product, administrative, and patient-facing roles assumes equivalent cognitive demands without documented analysis.

If subgroup pass rates fall below the four-fifths threshold, the burden shifts to the organization to demonstrate job-relatedness and business necessity. Without documented linkage to job-specific KSAOs, that defense weakens.

Recommendation: Conduct structured job analyses for each major job family. Map every assessment directly to identified KSAOs and maintain written documentation of selection rationale. Monitor subgroup pass rates regularly to identify potential adverse impact early.
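The four-fifths check referenced above is simple arithmetic: divide each subgroup's pass rate by the highest subgroup pass rate and flag any ratio below 0.80. The sketch below shows one way to run that check. The group labels, counts, and function name are hypothetical, and a flagged ratio is a prompt for further review rather than a legal conclusion.

```python
def adverse_impact_ratios(applicants, passed, threshold=0.8):
    """Compare each group's pass rate with the highest group pass rate.

    applicants, passed: dicts mapping group label -> count.
    Returns pass rate, impact ratio, and a four-fifths flag per group.
    """
    rates = {group: passed[group] / applicants[group] for group in applicants}
    highest = max(rates.values())
    return {
        group: {
            "pass_rate": rate,
            "impact_ratio": rate / highest,
            "below_four_fifths": rate / highest < threshold,
        }
        for group, rate in rates.items()
    }


# Hypothetical counts for one assessment in one job family.
applicants = {"Group A": 100, "Group B": 80}
passed = {"Group A": 60, "Group B": 36}

for group, result in adverse_impact_ratios(applicants, passed).items():
    print(group, result)
```

In this example Group B passes at 45% against 60% for Group A, an impact ratio of 0.75, so the assessment would be flagged for review. Running the check per assessment and per job family keeps the monitoring tied to the documented KSAO linkage.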

Risk 2: Subjectivity in Unstructured Interviews

Unstructured interviews introduce variability in questioning, evaluation standards, and weighting of attributes. Research consistently shows that structured interviews provide stronger reliability and validity (Spector, 2021).

Inconsistent emphasis on “culture fit” or personality similarity increases bias exposure and reduces defensibility.

Recommendation: Implement structured interviews using standardized, job-related questions tied to KSAOs. Use behaviorally anchored rating scales (BARS) and require written justification for ratings. Provide interviewer training and periodic calibration.

Risk 3: Failure to Define Essential Functions Under the ADA

Hiring decisions must be based on essential job functions. When assessments measure characteristics unrelated to essential duties, qualified applicants may be unnecessarily screened out.

Recommendation: Clearly distinguish essential versus marginal functions during job analysis. Ensure assessments measure only required competencies. Establish a transparent accommodation process for testing modifications.

Conclusion

Legal risk in hiring does not arise from the use of assessments or interviews. It arises when those tools are implemented without structured alignment to job requirements and documentation standards. Grounding selection decisions in formal job analysis, structured evaluation, and clearly defined essential functions strengthens both defensibility and hiring precision.

Training Evaluation & Performance Improvement Plan

Applied Learning & Development Intervention

Context

This project focuses on a Learning & Development (L&D) Manager within a 300-person healthcare organization. The role is responsible for identifying training needs, designing instructional programs, and evaluating effectiveness. Gaps in training design and evaluation capability can directly affect frontline healthcare performance and compliance outcomes.

The intervention targeted improvements in structured needs analysis, objective alignment, and evaluation design.

Training Goals

1. Strengthen Training Needs Diagnosis (30 days): The L&D Manager will conduct structured needs assessments using organization, job, and person-level data and document whether training is warranted, including targeted KSAOs.

2. Improve Objective Alignment (45 days): The L&D Manager will develop training objectives that clearly distinguish between learning criteria and performance criteria, including explicit standards for success.

3. Build Evaluation Capability (60 days): The L&D Manager will design a training evaluation plan incorporating at least one learning metric and one behavior or results-based metric.

Training Approach

The intervention consisted of a two-week targeted development program (6–8 hours total) that combined brief eLearning modules with applied exercises.

Content focused on:

Structured needs analysis

KSAO identification

Differentiation between training-level and performance-level criteria

Training transfer principles

Applied exercises required the L&D Manager to diagnose real organizational scenarios, draft aligned objectives, and design evaluation criteria. Facilitation was provided by a senior HR or organizational development leader.

Evaluation Design

Training-Level Evaluation: A post-training assessment measured understanding of needs analysis and evaluation principles. An 80 percent proficiency threshold indicated successful learning.

Performance-Level Evaluation: Within 60 days, the L&D Manager submitted a revised frontline training plan. The plan was evaluated using a rubric assessing alignment among:

Identified needs

Training objectives

Performance criteria

A simple pretest–posttest comparison assessed structural improvement in training plan quality.

Theoretical Foundation

The plan is grounded in training transfer research (Baldwin & Ford, 1988), Kirkpatrick’s evaluation framework (1977), and structured training principles (Spector, 2021).

Ongoing Graduate Research

Tracking Modality and Self-Regulatory Awareness

I am currently developing my thesis study examining how structured tracking systems influence perceived self-regulatory awareness and adherence during recovery from temporary health-related performance disruptions.

The study uses a randomized experimental design and has received IRB approval as minimal-risk research. It focuses on structured behavioral tracking, measurement validity, and the practical implications for return-to-work and return-to-learn systems in healthcare-adjacent settings.

My research draws from occupational health psychology and structured decision frameworks, with close attention to compliance standards and participant protections. Full methodology and findings will be available following proposal defense and data collection.

Featured Tradeflock article

References

Adamska-Chudzińska, M., & Pawlak, J. (Eds.). (2025). Work engagement and employee well-being: Psychosocial support to build engaged human capital. Routledge.

Krzyżak, J., & Walas-Trębacz, J. (2025). Application of the SCARF model in building work engagement. In Supportive organizational culture (pp. xx–xx). Routledge. https://doi.org/10.4324/9781032715407-5

Schaufeli, W. B. (2017). Applying the Job Demands–Resources model: A ‘how to’ guide to measuring and tackling work engagement and burnout. Organizational Dynamics, 46(2), 120–132.

Spector, P. E. (2021). Industrial and organizational psychology: Research and practice (8th ed.). Wiley.

Szydło, R., Tyrańska, M., Wiśniewski, K., Rynduch, I., & Adamczyk-Kowalczuk, M. (2025). Organizational culture and climate that shape engagement. In Work engagement and employee well-being (pp. xx–xx). Routledge. https://doi.org/10.4324/9781032715407-4

U.S. Department of Health & Human Services. (2023). Health Insurance Portability and Accountability Act of 1996 (HIPAA).

U.S. Equal Employment Opportunity Commission. (n.d.). Genetic Information Nondiscrimination Act (GINA).

Venkatesh, V., Brown, S. A., & Sullivan, Y. W. (2023). Conducting mixed-methods research: From classical social science to the age of big data and analytics. Virginia Tech Publishing.
